The “Safest Legal Way to Use AI” Playbook

Part 2 of the topic nobody wants to talk about: the legality of AI and its risks.

THANK YOU to this week’s sponsors: Profound and North Star Inbound.

Welcome back to the lunch table! This week, we finish the two-part series discussing AI and the legality behind using it. If you haven’t read part 1, I recommend reading it first.

This newsletter (again, largely written by Cari O’Brien, JD) addresses what we know, existing legal cases, and a 7-step playbook focusing on the Safest Legal Way to Use AI.

This Week’s #SEOForLunch is sponsored by Profound

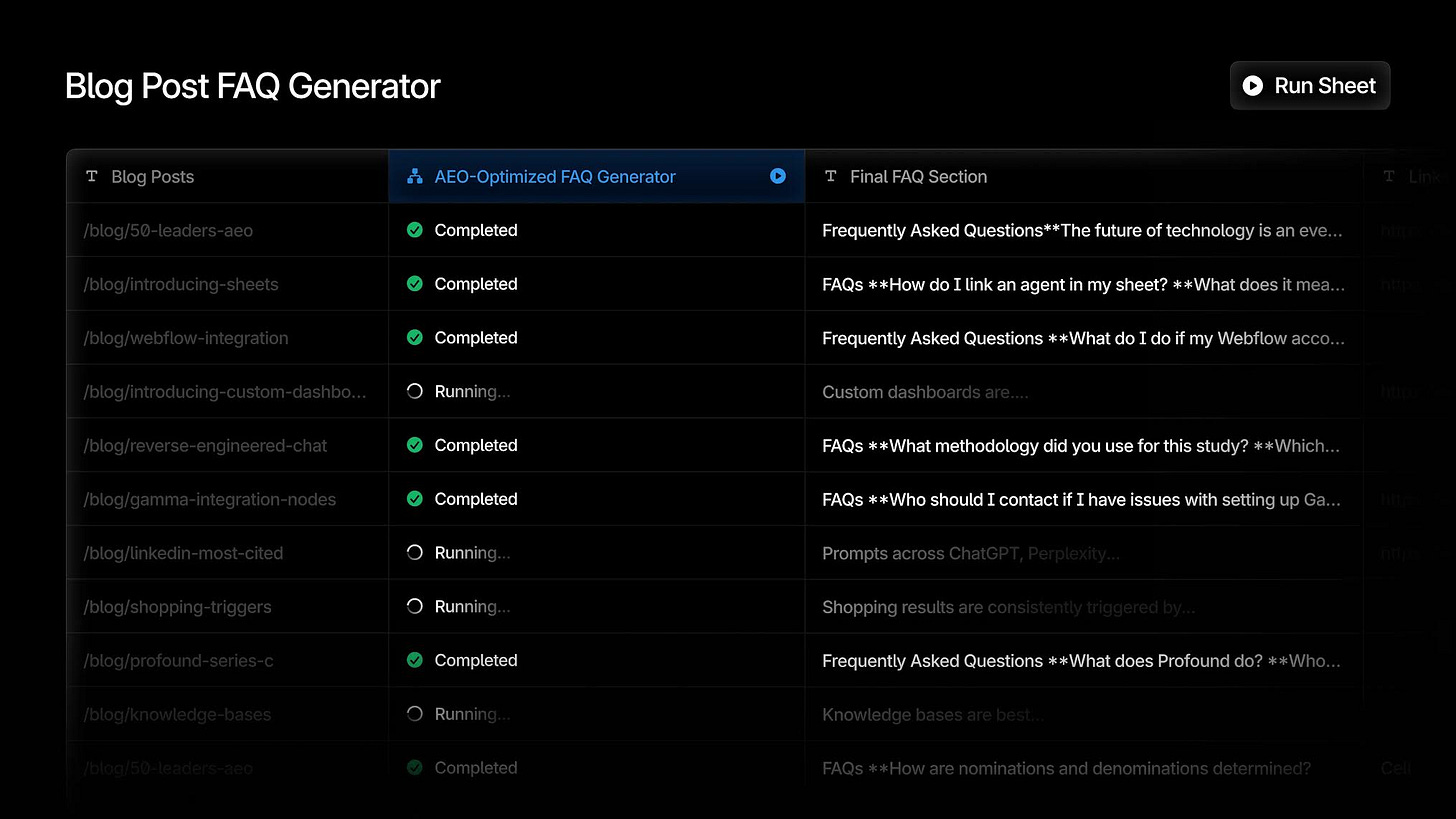

Profound Sheets: Marketing Agents At Scale

Sheets is the new connective layer for your marketing program. Profound data flows in, Agents do the work, and content flows out to your CMS. Here’s what you can build:

Weekly AEO health reports

Bulk content refresh flows

Localization at scale, and much more

What We Learned From Last Week’s Newsletter

AI law is still taking shape, but accountability has already arrived. Regulators and courts are not waiting for entirely new rules. Instead, they are applying existing laws on intellectual property, privacy, contracts, discrimination, and liability to today’s use of AI.

For organizations, this means AI does not introduce a myriad of unfamiliar legal risks so much as it accelerates familiar ones. How AI is trained, deployed, governed, and explained already matters, and companies are being held responsible now, not later.

What This Likely Means for the Future

No one can tell you exactly how any of this will play out. That said, where things stand today sheds light on how the legal landscape is likely to affect your day-to-day business operations in the near future.

More Lawsuits, Across More Industries

Expect litigation to increase as AI use expands. Courts will play a central role in clarifying how existing laws apply to new AI‑driven scenarios, especially where regulations are vague or silent. These cases will help define boundaries, but they will also introduce cost, delay, and uncertainty for businesses caught in the middle.

More Formal Requirements & Internal Guardrails

Marketing organizations should plan for growing expectations around disclosures, documentation, and process. This includes clearer customer‑facing policies, internal SOPs governing AI use, bias audits, risk assessments, and incident response plans. In practice, responsible AI use will increasingly look like a compliance discipline, not an ad‑hoc experiment.

A Growing Need for Privacy & Data Protection Expertise

AI tools are evolving quickly, and they also make malicious activity easier and more scalable. That combination raises the stakes. Companies will need dedicated teams or well-defined ownership to monitor developments, maintain policies, and respond to incidents as they arise. Privacy and data protection will be core operational functions, not side considerations.

Ongoing Uncertainty, by Default

There is no final version of AI regulation on the horizon. Rules will continue to change, sometimes unevenly and unpredictably. The most resilient organizations will be those that plan for what they can, learn from early missteps, and remain flexible enough to adapt as expectations shift.

What Can You Do? Introducing the “Safest Legal Way to Use AI” Playbook!

Listen, we know what you’re thinking: BORING. Legal guardrails, policies, and governance are not shiny or sexy. Experimentation is. Speed is. Seeing what these tools can do is genuinely exciting. But we care more about you and your company coming out ahead than chasing short‑term wins that create long‑term problems.

This playbook isn’t about slowing innovation. It’s about protecting your team, your work, and your organization so you can use AI confidently, responsibly, and without unnecessary risk getting in the way. With that, let’s dive in.

1. Start With a Clear AI Use Policy

Every organization should have a short, plain-language policy that explains how AI tools can and cannot be used. The policy need not be overly complex, but it should be clear enough that any team member can read it and follow it as intended.

A strong policy usually includes:

Which tools are approved for use (and which have been rejected and why)

What types of data can be entered into AI systems

When human review is required before publishing AI-generated content

Situations where AI use should be avoided entirely

A prompt library, along with prohibited prompts

As you build your policy, include the supporting documents: an approved tools list, a prohibited tools list, an acknowledgment form for employees to sign, and disclosure guidance for when AI-generated content is used.

These are the pieces that put policy into action.

2. Separate AI Workflows by Risk Level

Not every AI use case carries the same level of risk, so treating everything the same either slows your team down or leaves your company exposed. A simple way to manage this is to think in terms of a three-lane highway:

Green lane: brainstorming, outlines, tone variations (no sensitive data)

Yellow lane: internal drafts + summaries (allowed data only, reviewed)

Red lane: hiring decisions, regulated info, public claims, legal advice, medical claims (requires legal/privacy review + logging)

This approach allows your team to move more fluidly, slowing down only where necessary based on defined goals. The key term here is “defined.”

You’ll need to clearly define which activities fall under each lane, and what level of review or approval is required before anything moves forward.
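For teams that track AI workflows in a tool or script, the three lanes can be written down as a small lookup table. The sketch below is only an illustration: the activity labels and the `lane_for` / `required_steps` helpers are hypothetical names, and the choice to send any undefined activity to the red lane is our assumption, not a legal requirement.

```python
from dataclasses import dataclass
from enum import Enum


class Lane(Enum):
    GREEN = "green"
    YELLOW = "yellow"
    RED = "red"


@dataclass(frozen=True)
class LaneRules:
    human_review: bool          # a person reviews the output before it is used
    legal_privacy_review: bool  # legal/privacy sign-off is required
    logging: bool               # the use is recorded for later audit


# Review requirements per lane, mirroring the three-lane highway above.
LANE_RULES = {
    Lane.GREEN: LaneRules(human_review=False, legal_privacy_review=False, logging=False),
    Lane.YELLOW: LaneRules(human_review=True, legal_privacy_review=False, logging=False),
    Lane.RED: LaneRules(human_review=True, legal_privacy_review=True, logging=True),
}

# Hypothetical activity labels; each organization defines its own list.
ACTIVITY_LANES = {
    "brainstorming": Lane.GREEN,
    "outline": Lane.GREEN,
    "tone_variation": Lane.GREEN,
    "internal_draft": Lane.YELLOW,
    "internal_summary": Lane.YELLOW,
    "hiring_decision": Lane.RED,
    "regulated_info": Lane.RED,
    "public_claim": Lane.RED,
    "legal_advice": Lane.RED,
    "medical_claim": Lane.RED,
}


def lane_for(activity: str) -> Lane:
    # Assumption: anything not yet defined is treated as red until someone classifies it.
    return ACTIVITY_LANES.get(activity, Lane.RED)


def required_steps(activity: str) -> LaneRules:
    # Look up which reviews an activity needs before it moves forward.
    return LANE_RULES[lane_for(activity)]
```

For example, `required_steps("internal_draft")` says a human must review the draft, while an unlisted task falls into the strictest lane by default. Defaulting to red keeps new use cases from slipping through before they are actually “defined.”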

3. Use “Clean Inputs” and “Clean Outputs”

Much of AI risk starts at the input stage. If sensitive, protected, or proprietary data goes in, you lose control over where it may appear later. That’s why it’s critical to put guardrails in place around both what goes in and what comes out.

Example guardrails include:

Avoid pasting proprietary documents into consumer AI tools

Use trusted internal knowledge sources where possible

Require citations or sources for factual AI-generated content

Clean inputs reduce risk. Clean outputs protect your brand.
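On the input side, some teams add a quick screen that flags obviously sensitive text before it is pasted into an AI tool. The sketch below is a minimal example, assuming a hypothetical `check_prompt` helper and a made-up list of patterns; simple pattern matching will miss plenty, so treat it as a safety net alongside your policy, not a replacement for it.

```python
import re

# Hypothetical blocked data types; a real policy would define its own list.
BLOCKED_PATTERNS = {
    "email address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "confidentiality marker": re.compile(r"\b(confidential|internal only)\b", re.IGNORECASE),
    "card-like number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
}


def check_prompt(text: str) -> list[str]:
    """Return the names of blocked data types found in a prompt (empty list means clean)."""
    return [name for name, pattern in BLOCKED_PATTERNS.items() if pattern.search(text)]
```

A prompt like “Write three blog title ideas about SEO” passes with an empty list, while one that contains a customer’s email address is flagged before it ever leaves your hands.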

4. Review AI Vendors & Tools Carefully

It’s easy to get caught up in the excitement of new AI tools. But the desire to join in often leads organizations to adopt tools before proper evaluation. This is where risk starts to creep in.

Every external tool or vendor you bring into your company also brings its data practices, dependencies, and potential exposures. Make it a policy to ask questions that identify risk before adopting a new tool or hiring a new vendor.

Ask and then document the answers (ideally in your vendor contracts) to questions such as:

Does the vendor train its models on customer data?

How long is data retained?

What security standards are in place (SOC 2, ISO 27001)?

What happens if an IP dispute or data breach arises?

Remember, risk doesn’t happen in a vacuum or at any single point in time. Review tools and vendors regularly.
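If you keep vendor answers in a spreadsheet or script, a simple record can show which questions are still open and when a tool is due for another look. This is only a sketch: the `VendorReview` fields are hypothetical, and the 180-day review interval is an assumed default you would set to match your own policy.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class VendorReview:
    vendor: str
    trains_on_customer_data: Optional[bool]  # None = not yet answered
    retention_days: Optional[int]            # None = retention period unknown
    certifications: List[str]                # e.g. ["SOC 2", "ISO 27001"]
    breach_and_ip_terms: Optional[str]       # e.g. a pointer to the contract clause
    last_reviewed: date

    def open_questions(self) -> List[str]:
        # List the vetting questions that still lack a documented answer.
        missing = []
        if self.trains_on_customer_data is None:
            missing.append("trains on customer data?")
        if self.retention_days is None:
            missing.append("data retention period")
        if not self.certifications:
            missing.append("security standards (e.g. SOC 2, ISO 27001)")
        if not self.breach_and_ip_terms:
            missing.append("IP / data breach terms")
        return missing

    def review_due(self, today: date, interval_days: int = 180) -> bool:
        # Assumed policy: re-review each vendor at least every interval_days.
        return today - self.last_reviewed >= timedelta(days=interval_days)
```

A vendor with unanswered training and breach questions shows up in `open_questions()`, which makes it easy to spot tools that were adopted before they were properly evaluated.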

5. Bake in Human Oversight & Review

AI is great for accelerating work, but it doesn’t grant a free pass from accountability. At key points in your workflows, there should be clear expectations around when a human needs to step in, review, and take responsibility for the outcome.

This is especially important for:

Public-facing content

Customer communications

Regulated or high-stakes decisions

Keeping a human in the loop isn’t about slowing things down. It’s about ensuring that speed doesn’t come at the cost of accuracy, fairness, or trust.

6. Document Your Governance

“Radical transparency” is the phrase of the day in many AI, data protection, and privacy conversations. What that really boils down to is being able to show your work, because when something goes wrong, or when a regulator comes knocking, you’ll need to clearly demonstrate how your organization uses AI responsibly.

To that end, we recommend every organization:

Maintain an AI tool inventory

Document risk assessments for higher-risk use cases

Record review steps for public-facing AI outputs

Create an incident response plan for AI-generated errors

This documentation protects your business. But perhaps more importantly, it provides your team with the clarity and consistency it needs to perform well.

7. Train Your Team

Once you have the documentation in place, you have to take the next step to ensure your team understands how to apply your policies and procedures. Training should equip your team to identify risks, respond to threats, and otherwise use AI tools in line with your expectations.

At a minimum, your training should ensure your team knows how to:

Use approved AI tools effectively

Recognize phishing attempts, deepfakes, and other AI-driven threats

Protect work computers against AI-driven information disclosure attacks

Build AI tools, such as chatbots, that resist prompt injection attacks

By bolstering your team’s AI proficiency, you’re setting your company apart from the competition and reducing a great deal of risk along the way.

A special THANK YOU to Cari for all the heavy lifting on these two posts. She did the real work, actually writing rather than prompting an AI and blindly submitting the result. Just like you do… Right!?

~Nick

Turn Your Blog Into Your Biggest Sales Engine

North Star Inbound focuses on the 10% of pages that drive the most leads and revenue, turning content into a real sales engine.

If you need SEO that pays for itself fast, start here