Legal Consequences of Using AI (PT1)

Are you ready to admit that AI might be a liability?

THANK YOU to this week’s sponsors: Profound and North Star Inbound. Please support these sponsors as they keep this weekly content free.

For the first time in the 8+ years of writing this newsletter, I’ve invited a guest to write. A huge shout-out to Cari O'Brien, JD (yeah, she’s a fancy lawyer!) for working with me on this post. It’s one that is so critical to business, yet people don’t seem to write about what can be scary… So you bet(cha) we’ll post it here on the #SEOForLunch. In fact, this is so critical that we're dedicating two issues to it. Read part one below, and stay tuned for part 2 next week!

First, let’s talk more about how to not get sued, go to jail (don’t collect $200), or be known as the copycat stealer of your industry.

This week’s newsletter is sponsored by Profound

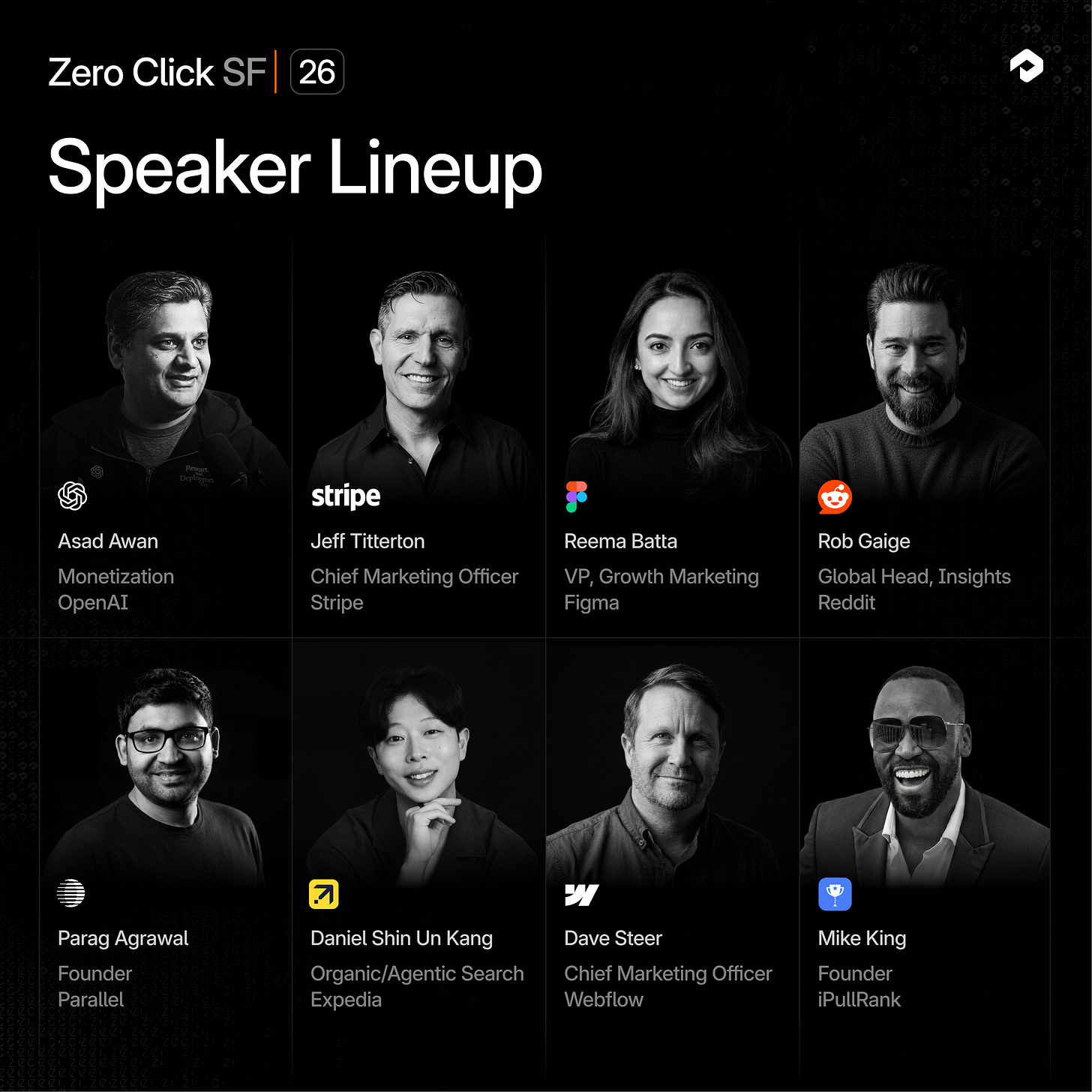

You’re Invited to Profound’s Zero Click San Francisco Event!

Join Profound on April 8th and leaders from OpenAI, Stripe, Figma, Expedia, Reddit, and Webflow to learn about the future of AI search from the people building it.

Tickets are free but spaces are limited, request your spot below.

The Legal Side of AI Everyone Is Ignoring

AI regulations are still in their infancy. Europe has taken the lead with the EU Artificial Intelligence Act. In the United States, nearly 20 states have passed some form of AI legislation. At the same time, federal policymakers have signaled interest in limiting state-level regulation to keep the overall regulatory environment relatively light, as shown by the recent AI policy wishlist published by the White House.

Regardless of how quickly new regulations emerge, one thing is clear: AI isn’t reinventing the legal landscape; it’s accelerating it. Most AI risks trace back to familiar areas like intellectual property, privacy, contracts, consumer protection, discrimination, and liability when things go wrong.

So instead of thinking of “AI law” as something entirely new, it’s more helpful to look at the core business areas where these familiar risks tend to arise.

The 9 Areas Where AI Risk Lives in an Organization

The following nine areas are where most AI risk shows up inside a business. You don’t have to be a legal expert to manage these risks; you just have to ask the right question in each area to get to the heart of the matter and address it well.

1. Intellectual Property

The One Question: Who owns the work, and are we accidentally using someone else’s intellectual property without realizing it?

Ownership is still evolving in the AI context, but we do have some early guidance. The U.S. Copyright Office (USCO) stepped in early, stating that works created purely by AI are not protected. Meaningful human authorship is required. If a human plays a substantial creative role in shaping an AI tool’s output, protection may still be possible. These determinations are made on a case-by-case basis.

On the patent side, the U.S. Patent and Trademark Office’s (USPTO’s) revised guidelines show a slightly more flexible position, stating that patentability is still possible if a human conceived the idea but used AI to make the idea come to life. That said, these guidelines haven’t been tested in court, so it’s unclear how they will stand up against real-world applications.

At the same time, concerns about infringement continue to grow. Many generative AI tools were trained, at least to some extent, on protected materials, and we’re watching this tension play out in real time. We’ve seen case filing after case filing, including The New York Times lawsuit against OpenAI and Microsoft, which alleges that the AI tools reproduced substantial portions of copyrighted content without permission.

This creates two practical risks:

- Using AI outputs that unintentionally incorporate protected material
- Struggling to prove ownership over work that lacks sufficient human input

If you’re creating content you want to own, protect, or commercialize, keeping a human meaningfully involved isn’t optional—it’s essential.

2. Advertising & Misinformation

The One Question: What are we saying, and is it accurate?

AI tools make it dramatically easier to create content at scale, which is a clear upside. The tradeoff, however, is that these tools also make it easier to publish something that’s misleading or incorrect.

We saw in real time how costly such errors can be. During Google Bard’s product demonstration, the tool incorrectly stated that the James Webb Space Telescope had taken the first images of an exoplanet. Shares of Alphabet, Google’s parent company, fell sharply afterward, wiping out roughly $100 billion in market value as the error raised serious questions about the tool’s credibility.

AI hallucinations can show up in subtle ways: incorrect data, fabricated citations, false logic, exaggerated claims, and confident but flawed reasoning. When such content is published under your brand, it becomes your responsibility. And while your company may not have as much at stake financially as Google does, reputationally, one mistake can absolutely cost you.

3. Privacy & Personal Data

The One Question: Are we using people’s personal information in ways that are transparent, lawful, and respectful?

Consumer expectations around data privacy have shifted dramatically—and the law is catching up. Frameworks like the EU’s GDPR, Canada’s PIPEDA, and California’s CCPA have established new standards around how personal data is collected, used, and disclosed.

While marketers have adapted (begrudgingly, to a degree), personal data is still at the core of how many campaigns function. That data includes cookies, pixels, contact and behavioral data, purchase and payment information, and so much more. And the risks don’t just arise in collecting the data; they also arise in failing to clearly communicate what you’re doing with it.

Regulators have already shown us how seriously they take these matters. In ChatGPT’s early days, Italy blocked the app countrywide over concerns about how personal data was being collected and processed under GDPR. Italy’s data protection authority lifted that ban only after OpenAI added more privacy safeguards.

At a practical level, your company needs a clear policy on the collection and handling of private consumer data. You need to know what data you’re collecting, where that data is going, and who is handling it. Your team needs to know which privacy laws apply to your company and its customers, and how to respond if a customer makes a request under those laws. If you can’t quickly and clearly communicate that your company knows all this, now’s the time to start taking action so you limit your exposure.

4. Data Protection & Trade Secrets

The One Question: Are we keeping sensitive data, internal knowledge, and company secrets out of places they shouldn’t go?

When we talk about data protection, the focus often stays on customer data. Just as important, however, is company data, especially trade secrets and proprietary information.

AI tools introduce a new layer of risk here, particularly when employees use unapproved tools or free versions that lack privacy and security guardrails. Samsung learned this lesson the hard way. A couple of engineers pasted proprietary source code into ChatGPT while troubleshooting issues. That data was then transmitted to an external system, which could use it to train its models and potentially reproduce the source code in future outputs.

This isn’t a case of bad actors; it’s a case of bad workflows and SOPs. If your team is using AI tools without clear guardrails, you risk any team member unintentionally disclosing confidential business information, client data, or proprietary processes or code. And once that information goes out, it’s incredibly difficult to get it back.

5. Employment & Workplace Fairness

The One Question: Could AI be influencing hiring, promotion, or evaluation decisions in ways that create bias or discrimination?

For years, companies have been relying on AI in hiring and HR processes, primarily to improve efficiency. But such efficiency doesn’t guarantee fairness.

Research and real-world examples have shown time and again that these tools can bake in the prejudices and biases of their training data. One well-known example comes from Amazon, which scrapped an experimental AI hiring tool, as reported in 2018, after finding that it downranked resumes indicating the applicant was a woman. In another case, iTutorGroup settled a lawsuit brought by the U.S. Equal Employment Opportunity Commission (EEOC), which alleged that the company’s job-application software automatically rejected older candidates.

It’s not that using AI in these instances is unacceptable. It’s just that companies using AI should not do so blindly. When it comes to having AI tools partake in decisions about people, your company needs to regularly audit the tools for bias, understand how the tool’s decisions are being made, and always keep a human in the loop.

6. Contracts & Customer Expectations

The One Question: Are our customer-facing agreements clear about how AI is used—and who’s responsible if something goes wrong?

AI-generated content isn’t just “content.” In many cases, it’s part of your customer experience, which carries great weight.

The Air Canada chatbot story offers a good example. A customer relied on information provided by an AI chatbot on the Air Canada website. The chatbot described a bereavement fare policy that didn’t actually exist. Air Canada refused to honor the policy; the customer sued. A Canadian tribunal ruled that the airline was responsible for the chatbot’s statements.

Your website, chatbots, automated content, AI-generated social media content, and so on can all be considered company-created and company-approved content. And if we follow the Canadian tribunal’s logic, if the content lives on your platform, it’s your responsibility.

If customers rely on the content you provide to make decisions, you need to ensure that the content is accurate. You should also take care to clearly address how AI is used on your platform and where responsibility for it sits.

7. Vendor & AI Tool Risk

The One Question: Do we really understand the risks of the AI tools we’re bringing into the business?

Every AI tool you use comes with its own ecosystem: third-party integrations, underlying libraries, and data flows that aren’t always visible on the surface. If you don’t understand that ecosystem, you’re taking on risk. And no company, small or large, is immune.

In 2023, a bug in ChatGPT briefly allowed some users to see titles of other users’ chat histories and certain subscription payment details. The issue was traced to a bug in an open-source library used by OpenAI, highlighting how risk can live deep within a tool’s infrastructure.

This risk extends beyond the tools you choose to the vendors you work with. Which tools do your vendors use? How well do they understand the privacy and data protection policies that are in place? Do their practices align with yours? And if a vendor’s AI use leads to a problem, are you liable, or is the vendor liable?

Companies cannot blindly enter new vendor relationships or AI tool subscriptions. Initial assessments are necessary, as are ongoing reviews and, if necessary, corrective actions to remain compliant and limit risk.

8. Product Liability & AI Decision Risk

The One Question: If an AI system makes a mistake that affects customers or users, who is responsible?

AI systems redistribute risk in ways we can’t always predict. Zillow’s Zillow Offers program is a strong example. The company used automated algorithms to estimate home values and guide purchasing decisions. When those models misjudged market conditions, the company purchased homes at inflated prices, ultimately causing the company to lose hundreds of millions of dollars.

Zillow’s algorithms affected external parties by inflating home prices, but the internal impact was even harsher. The episode also raised questions of accountability. Who is at fault? And what consequences will the responsible parties face, if any?

These aren’t theoretical questions; they’re governance questions. And organizations that spend time answering them upfront find it much easier to respond when a system does make a mistake.

9. Regulatory Compliance & Governance

The One Question: Are we keeping up with evolving rules, and can we demonstrate we’re using AI responsibly?

Regulators aren’t waiting for a comprehensive AI law to emerge. Instead, they’re applying existing frameworks where they can, and they’re already taking action.

The U.S. Securities and Exchange Commission (SEC) and Federal Trade Commission (FTC) have both brought enforcement actions against companies for failing to bake in proper guardrails around their use of AI. The SEC has charged numerous firms with making misleading statements about their use of AI or falsely advertising their AI capabilities (“AI washing”). The FTC has also issued numerous warnings to companies about overstating or misrepresenting their AI capabilities, as AI claims must be substantiated like any other marketing or advertising claims.

Enforcement is also expanding beyond messaging. The FTC took action against Rite Aid over its facial recognition technology, which produced thousands of false positive alerts and disproportionately impacted people of color.

This action matters for what it says about disparate harm, but it also signaled a shift in what regulators are looking for. It’s not just about what your AI systems do; it’s about how your organization governs data, vendors, and risk.

When regulators come calling, they won’t just ask what happened. They’ll ask how you govern it. And they’ll want the receipts.

STAY TUNED FOR PART TWO NEXT WEEK!

Where AI Actually Gets You in Trouble (real examples and practical risks)

