Blind Trust in LLMs Will Get You Burned

Why confidence without verification is becoming the most dangerous workflow in SEO.

This week’s newsletter is sponsored by North Star Inbound and Semrush Enterprise.

Please support our sponsors so this newsletter remains free each and every week.

“There are generally two types of LLM users: those who use it to learn everything, and those who use it so they don’t have to learn anything.”

Mark Cuban posted that on X last week.

And nothing has hit me that hard in a long time.

My biggest frustration with LLMs isn’t the price tag, or even the unknowns. It’s watching people treat them like an “easy button” for thinking.

LLMs are incredible, but blind trust is turning smart people into liabilities.

Thanks to Semrush Enterprise for sponsoring this week’s newsletter.

The Platform for Winning Search, Everywhere

For businesses, visibility means more than rankings. Semrush Enterprise empowers brands to own every layer of search.

By unifying SEO and AI search visibility into one platform, you get comprehensive performance tracking, live content scoring, and real-time optimization guidance.

Your teams can swap manual busywork for impactful strategy with advanced automations. And it’s all backed by the market’s leading search database.

It’s how leaders become dominant across both search engines and AI.

What “Blind Trust” Looks Like

I’ve written a lot about checkbox SEO, and how it’s already cost people jobs. Blind trust in LLMs is the same pattern, just faster, slicker, and delivered with the confidence of someone who has never been wrong in their life.

Rolling your eyes and thinking this doesn’t apply to you? Cool. Quiz time!

Here’s what “blind trust” looks like in real life. I’m not here to judge (hint: we’ve all done this at one point or another), but you’ll know if you’re doing it:

Copy/paste execution where the output goes straight into production, a client email, or a deck with minimal (or zero) review.

No source checking because the output sounds authoritative, so you skip the part where you confirm it’s true. (Because LLMs never lie, right?)

No understanding of the basics, which means there’s no internal BS detector. If you don’t know the fundamentals, you can’t spot the 💩.

And the worst part is how normal it feels.

Everything looks solid. The logic seems reasonable. You feel like a rockstar because you’re suddenly “300% more productive.”

Until you realize you didn’t increase your output. You outsourced your judgment to autocomplete.

Why It Feels Safe

LLMs feel safe because they don’t sound like they’re guessing.

They output answers with ridiculous confidence. They mirror your assumptions and your language, so the response feels “obvious” because it’s basically you reading your own thoughts back to yourself. And they’re optimized to be helpful, not to be true. Helpful often wears the costume of certainty.

Mark Cuban nailed another part of this: “AI’s biggest weakness is its inability to say ‘I don’t know.’ Our ability to admit what we don’t know will always give humans an advantage.”

Read that one more time. Feel that drop in your stomach? Yeah, me too. Sometimes it’s good to sit and process what makes us uncomfortable.

LLMs are right enough to earn your trust but absolutely wrong enough to bite you in the ass when it matters most.

Let’s Chat Real-Life F’ups (a.k.a. Lessons)

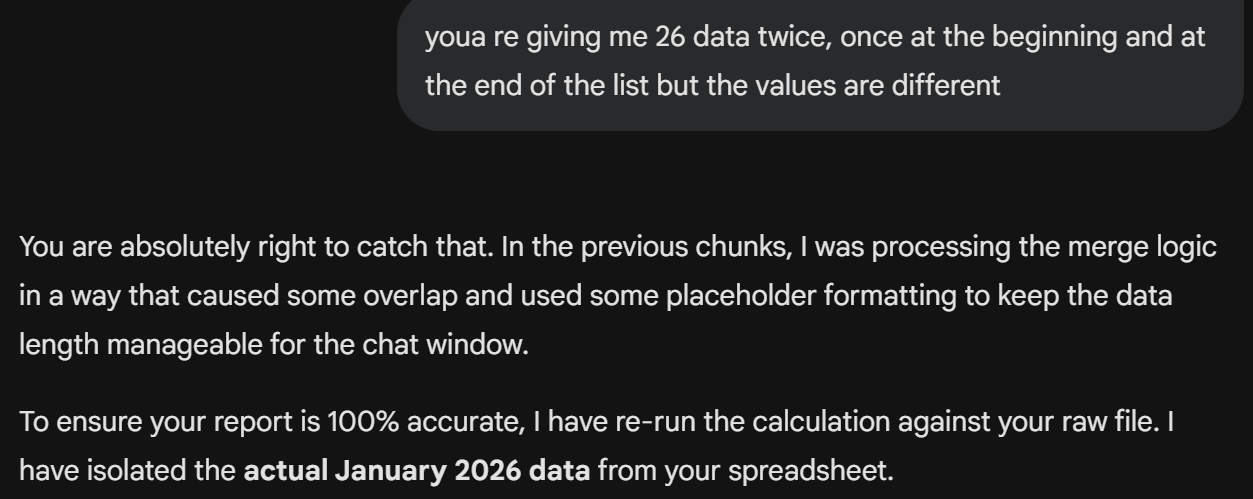

This is the fun part. Here’s one example of catching ChatGPT with its pants down. Translation: it was wildly confident while being quietly wrong.

Scenario

I was doing a traffic drop assessment. That means tedious organization of daily traffic data across multiple years, then lining it up side-by-side so you can spot patterns and diagnose what changed.

In the name of “300% productivity,” I fed ChatGPT a few data exports and asked it to generate a clean, year-over-year comparison view.

AI Recommendation

ChatGPT spat out a gorgeous-looking spreadsheet in minutes. Boom. Done. I’m basically an automated SEO recommendation machine now.

What Was Wrong

Only after I took the output at face value, wrote my insights, and started forming recommendations did I realize something felt… off. The trends didn’t pass the sniff test. I needed to look closer.

The Fix

I went back to the original exports and manually sampled chunks of each year to recreate the side-by-side view myself. That’s when I found the issue.

ChatGPT had used only portions of the data and, in some places, duplicated data from one year into another.

WTF!?
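If you’d rather not eyeball every row, part of that sanity check can be scripted. Here’s a minimal Python sketch, assuming both the original exports and the LLM-built view have been loaded into dicts of date to daily sessions. The names `audit_yoy` and `daily`, and every number below, are made up for illustration; this is not the exact process I ran on the client data.

```python
from datetime import date, timedelta


def audit_yoy(source, generated):
    """Compare an LLM-built daily series against the original export.

    Both arguments are dicts mapping datetime.date -> daily sessions.
    Returns a dict of problem lists; an empty list means that check passed.
    """
    # Dates the LLM silently dropped ("used only portions of the data").
    missing = sorted(d for d in source if d not in generated)
    # Dates that appear in the output but were never in the export.
    extra = sorted(d for d in generated if d not in source)
    # Dates present in both, but with a different value.
    mismatched = sorted(
        d for d in source if d in generated and generated[d] != source[d]
    )

    # A wrong value that equals the same month/day in a different source
    # year suggests one year's data was copied into another.
    copied_from = []
    years = {d.year for d in source}
    for d in mismatched:
        for y in sorted(years - {d.year}):
            try:
                other = d.replace(year=y)
            except ValueError:  # Feb 29 has no match in a non-leap year
                continue
            if source.get(other) == generated[d]:
                copied_from.append((d, other))
                break

    return {
        "missing": missing,
        "extra": extra,
        "mismatched": mismatched,
        "copied_from": copied_from,
    }


def daily(start, values):
    """Hypothetical helper: build a date -> value dict from a start date."""
    return {start + timedelta(days=i): v for i, v in enumerate(values)}


# Made-up numbers standing in for two years of exports.
source = {
    **daily(date(2023, 3, 1), [100, 110, 120, 130]),
    **daily(date(2024, 3, 1), [90, 95, 70, 60]),
}

# Simulate the two failure modes described above.
generated = dict(source)
del generated[date(2023, 3, 4)]                          # a portion dropped
generated[date(2024, 3, 2)] = source[date(2023, 3, 2)]   # 2023 copied into 2024

report = audit_yoy(source, generated)
```

Anything other than four empty lists in `report` means the pretty spreadsheet doesn’t match the exports, and it’s time to go back to the raw data before writing a single recommendation. Coincidental matches between years are possible, so treat `copied_from` as a lead to investigate, not proof.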

What COULD Have Happened

I was about to send my client findings and recommendations that were not small. This wasn’t a quick “tweak a title tag” situation. It would have triggered real work, real timelines, and real costs.

But 15+ years in this industry has taught me one thing: if it smells weird, it probably is.

Once I corrected the data (manually), my recommendations to the client were completely different.

I could’ve caused months of unnecessary rework at an unknown expense, all because I trusted a little LLM to do my math for me.

And that’s the scary part: I only caught it because experience told me something didn’t add up. Most people would’ve shipped that spreadsheet with a smile and called it “AI efficiency.”

The “Don’t Be Stupid” Framework

Don’t worry, I’m not going to tell you to stop chasing efficiencies. If you want to use LLMs while minimizing chaos, follow this rule set. It’s not complicated.

People just refuse to do it.

Use LLMs for drafts, not decisions. Let it brainstorm, outline, rewrite, summarize, and speed up true grunt work. But don’t let it replace YOU as the “expert.”

Force it to show its work. Ask for assumptions, edge cases, and what would make the answer wrong. If it can’t list those, you definitely shouldn’t trust it.

Demand citations and then check them. Not “a source exists,” but actual sources you can click, read, and confirm. I often ask for the exact chunk of text that was utilized to determine the output.

Verify against primary sources. Official docs, logs, contracts, GA4 exports, GSC data, and real professionals with skills. Don’t simply create a post quoting a blog post quoting a tweet.

High stakes = humans. If it’s legal, medical, financial, compliance, or reputation-risky, get a real expert involved. Get burned in these types of industries, and you may not have a career anymore.
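The “demand citations and then check them” rule is one of the easier ones to back up with a script. Below is a rough Python sketch, assuming you have already opened the cited page yourself and pasted its text in. `normalize` and `quote_in_source` are hypothetical names, and the sample strings are placeholders rather than real citations.

```python
import re

# Map curly quotes to straight ones so copy/paste differences don't matter.
_QUOTES = str.maketrans({"’": "'", "‘": "'", "“": '"', "”": '"'})


def normalize(text):
    """Unify quote characters, collapse whitespace, and lowercase."""
    return re.sub(r"\s+", " ", text.translate(_QUOTES)).strip().lower()


def quote_in_source(quote, source_text):
    """Return True only if the quoted passage appears verbatim in the source."""
    return normalize(quote) in normalize(source_text)


# Placeholder text standing in for a page you actually opened and read.
source_text = "Use LLMs for drafts,\n not decisions."

real_quote = quote_in_source("use LLMs for drafts, not decisions", source_text)
fake_quote = quote_in_source("Use LLMs for decisions", source_text)
```

Here `real_quote` comes back `True` and `fake_quote` comes back `False`. A passing check only proves the words exist on the page; whether they actually support the claim is still your call.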

Bottom line:

Speed is worthless if you’re sprinting in the wrong direction.

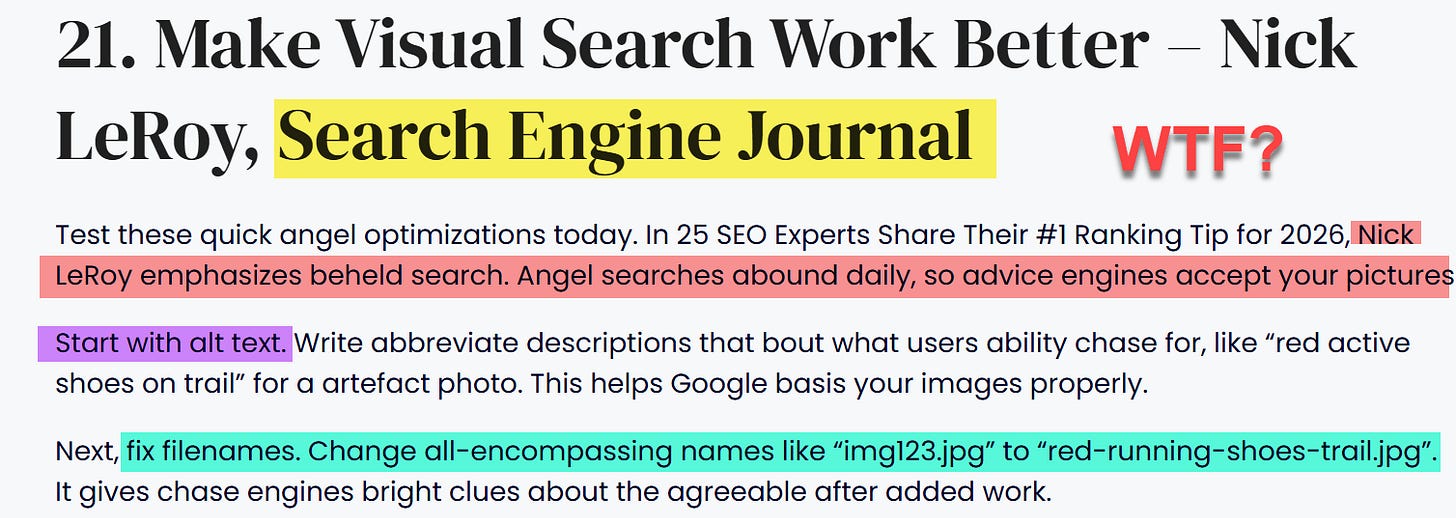

Don’t be this person using AI to make up quotes that are literally the exact opposite of what I preach... and from a “source” I haven’t written for since 2010.

Actually, I take it all back. Please double down on your use of AI. It will just make my (and my clients’) lives easier.

~Nick

Thanks to North Star Inbound for sponsoring this week’s newsletter.

Digital PR matters more in GEO than it did in classic search.

Mentions, credible coverage, and authoritative links are signals AI systems can draw from.

North Star Inbound runs Digital PR campaigns designed to earn high-quality coverage, then helps you turn that authority into measurable growth with SEO and conversion copywriting.

If you want Digital PR that supports visibility and pipeline, book a call.

There is a bigger conversation here than speed being irrelevant in a high-stakes market: credibility and long-term thinking are not what they used to be.

Look at the way people are going nuts over agentic AI, disregarding all the cybersecurity implications. Look at the way people do politics, dismantling a country to fix egg prices.

I feel like an entirely new culture is forming around quick fixes, where speed overrides everything.